LangGraph is a framework for building stateful LLM applications, which makes it a good fit for building ReAct (Reasoning and Acting) agents.

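ReAct agents combine LLM reasoning with action execution. They iteratively think, call tools, and act on the resulting observations to achieve the user's goal, adjusting their approach as they go. The pattern was introduced in the 2023 paper "ReAct: Synergizing Reasoning and Acting in Language Models" and aims to mirror flexible, human-like problem solving rather than a rigid workflow.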
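The think, act, observe cycle can be sketched without any LLM at all. The block below is only an illustration under stated assumptions: `scripted_model`, `TOOLS`, `lookup_temperature`, and the canned temperature are hypothetical stand-ins invented for this sketch, not part of LangGraph or Gemini.

```python
from typing import Callable

# Hypothetical tool registry: one toy tool that returns a canned value.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_temperature": lambda city: {"Berlin": "18 degrees"}.get(city, "unknown"),
}

def scripted_model(history: list[str]) -> dict:
    """Stand-in for an LLM: picks the next step from the history so far."""
    observations = [h for h in history if h.startswith("Observation: ")]
    if not observations:
        # Nothing observed yet, so the "reasoning" concludes a tool call is needed.
        return {"thought": "I need the temperature.",
                "action": ("lookup_temperature", "Berlin")}
    # An observation exists, so answer from it.
    value = observations[-1].removeprefix("Observation: ")
    return {"thought": "I have what I need.",
            "answer": f"It is {value} in Berlin."}

def react_loop(question: str, max_steps: int = 5) -> tuple[str, list[str]]:
    """Alternate between reasoning and acting until an answer is produced."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        step = scripted_model(history)
        history.append(f"Thought: {step['thought']}")
        if "answer" in step:
            return step["answer"], history
        tool_name, tool_arg = step["action"]
        history.append(f"Action: {tool_name}({tool_arg})")
        history.append(f"Observation: {TOOLS[tool_name](tool_arg)}")
    return "Stopped: step limit reached.", history

answer, trace = react_loop("How warm is it in Berlin?")
```

In a real agent the scripted function is replaced by a model call, and the history is a list of chat messages rather than strings.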

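LangGraph offers a prebuilt ReAct agent (create_react_agent), but building the agent yourself is the better choice when you need more control and customization over the ReAct implementation. This guide walks through a simplified version of such a custom build.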

LangGraph models an agent as a graph built from three key components:

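- State: a shared data structure (typically a TypedDict or a Pydantic BaseModel) that represents the current snapshot of the application.
- Nodes: encode the agent's logic. A node receives the current state as input, performs some computation or side effect (for example, an LLM call or a tool call), and returns an updated state.
- Edges: determine the next Node to execute based on the current State, which allows both conditional logic and fixed transitions.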
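How the three components interact can be shown with a few lines of plain Python. This is a toy runner written for illustration only, not LangGraph's implementation; `END`, `CounterState`, `increment`, `route`, and `run` are all hypothetical names.

```python
from typing import Callable, TypedDict

END = "__end__"  # hypothetical sentinel used only in this sketch

class CounterState(TypedDict):
    """State: the shared snapshot every node reads and updates."""
    count: int
    log: list[str]

def increment(state: CounterState) -> dict:
    # Node: does some work and returns only the keys it wants to update.
    return {"count": state["count"] + 1, "log": state["log"] + ["increment"]}

def route(state: CounterState) -> str:
    # Edge: inspects the current state and names the next node to run.
    return "increment" if state["count"] < 3 else END

NODES: dict[str, Callable[[CounterState], dict]] = {"increment": increment}

def run(state: CounterState, entry: str) -> CounterState:
    current = entry
    while current != END:
        update = NODES[current](state)
        state = {**state, **update}  # merge the partial update into the state
        current = route(state)
    return state

final_state = run({"count": 0, "log": []}, entry="increment")
```

The same division of labor applies in the agent built in this guide: nodes return partial state updates, and an edge function decides where to go next.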

If you don't have an API key yet, you can get one from Google AI Studio.

    pip install langgraph langchain-google-genai geopy requests

Set your API key in the environment variable GEMINI_API_KEY.

    import os

    # Read your API key from the environment variable or set it manually
    api_key = os.getenv("GEMINI_API_KEY")
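Because os.getenv returns None when the variable is missing, a missing key would otherwise only surface later, when the model is first called. One possible guard is sketched below; `require_env` is a hypothetical helper introduced for this example.

```python
import os

def require_env(name: str) -> str:
    """Return the value of an environment variable, or fail with a clear message."""
    value = os.getenv(name, "").strip()
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set.")
    return value
```

With this helper, the key lookup becomes `api_key = require_env("GEMINI_API_KEY")`, and a missing key fails immediately with a readable error.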

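To show how to implement a ReAct agent with LangGraph, this guide walks through a practical example. You will build an agent whose goal is to use a tool to find the current weather for a specified location.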

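For this weather agent, the State holds the ongoing conversation history (as a list of messages) and a counter (as an integer) that tracks the number of steps taken.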

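LangGraph provides a helper function, add_messages, for updating the message list in the state. It acts as a reducer: it takes the current list and the new messages and returns the merged list. It matches updates by message ID and, by default, appends any message whose ID it has not seen before.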

    from typing import Annotated, Sequence, TypedDict

    from langchain_core.messages import BaseMessage
    from langgraph.graph.message import add_messages  # helper function to add messages to the state

    class AgentState(TypedDict):
        """The state of the agent."""

        messages: Annotated[Sequence[BaseMessage], add_messages]
        number_of_steps: int
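The merge-by-ID behavior of a reducer such as add_messages can be illustrated with plain dictionaries. The function below is a simplified stand-in written for this guide, not the library's implementation: `merge_messages` is a hypothetical name, and the real helper operates on message objects.

```python
def merge_messages(current: list[dict], new: list[dict]) -> list[dict]:
    """Replace messages whose ID already exists; append the rest."""
    merged = list(current)
    index_by_id = {m["id"]: i for i, m in enumerate(merged)}
    for message in new:
        if message["id"] in index_by_id:
            # Known ID: update the existing entry in place.
            merged[index_by_id[message["id"]]] = message
        else:
            # Unseen ID: append to the end of the history.
            index_by_id[message["id"]] = len(merged)
            merged.append(message)
    return merged

history = [{"id": "1", "role": "user", "content": "Weather in Berlin?"}]
history = merge_messages(history, [{"id": "2", "role": "ai", "content": "Checking."}])
history = merge_messages(history, [{"id": "2", "role": "ai", "content": "It is mild."}])
```

After the three steps the history holds two messages, and the second one carries the updated content. This is why a node can return only its new messages and still end up with the full conversation in the state.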

Next, define the weather tool.

    from langchain_core.tools import tool
    from geopy.geocoders import Nominatim
    from pydantic import BaseModel, Field
    import requests

    geolocator = Nominatim(user_agent="weather-app")

    class SearchInput(BaseModel):
        location: str = Field(description="The city and state, e.g., San Francisco")
        date: str = Field(description="The forecast date to get the weather for, in yyyy-mm-dd format")

    @tool("get_weather_forecast", args_schema=SearchInput, return_direct=True)
    def get_weather_forecast(location: str, date: str):
        """Retrieves the weather using Open-Meteo API.

        Takes a given location (city) and a date (yyyy-mm-dd).

        Returns:
            A dict with the time and temperature for each hour.
        """
        # Note that Colab may experience rate limiting on this service. If this
        # happens, use a machine to which you have exclusive access.
        location = geolocator.geocode(location)
        if location:
            try:
                # A timeout keeps the tool from hanging if the service does not respond.
                response = requests.get(
                    f"https://api.open-meteo.com/v1/forecast?latitude={location.latitude}&longitude={location.longitude}&hourly=temperature_2m&start_date={date}&end_date={date}",
                    timeout=10,
                )
                data = response.json()
                # Map each hourly timestamp to its temperature.
                return dict(zip(data["hourly"]["time"], data["hourly"]["temperature_2m"]))
            except Exception as e:
                return {"error": str(e)}
        else:
            return {"error": "Location not found"}

    tools = [get_weather_forecast]
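The tool interpolates the model-supplied date straight into the request URL. A possible hardening, sketched here with the standard library only, is to validate the date and let urlencode build the query string. `build_forecast_url` and `BASE_URL` are hypothetical names for this example, and the coordinates are sample values.

```python
from datetime import date
from urllib.parse import urlencode

BASE_URL = "https://api.open-meteo.com/v1/forecast"

def build_forecast_url(latitude: float, longitude: float, day: str) -> str:
    """Build the hourly-temperature query used by the weather tool."""
    # Raises ValueError if `day` is not a valid ISO date such as 2025-01-15.
    parsed = date.fromisoformat(day)
    params = {
        "latitude": latitude,
        "longitude": longitude,
        "hourly": "temperature_2m",
        "start_date": parsed.isoformat(),
        "end_date": parsed.isoformat(),
    }
    return f"{BASE_URL}?{urlencode(params)}"

url = build_forecast_url(52.52, 13.405, "2025-01-15")
```

Catching the ValueError inside the tool and returning it as an error dict would let the model see what went wrong and retry with a corrected date.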

Now initialize the model and bind the tool to it.

    from datetime import datetime

    from langchain_google_genai import ChatGoogleGenerativeAI

    # Create LLM class
    llm = ChatGoogleGenerativeAI(
        model="gemini-3-flash-preview",
        temperature=1.0,
        max_retries=2,
        google_api_key=api_key,
    )

    # Bind tools to the model
    model = llm.bind_tools([get_weather_forecast])

    # Test the model with tools
    res = model.invoke(f"What is the weather in Berlin on {datetime.today()}?")
    print(res)

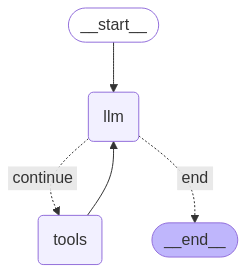
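Note that interpolating datetime.today() into the prompt inserts a full timestamp, while the tool schema asks for a yyyy-mm-dd date, so the model has to extract the date part itself. The sketch below shows the difference using a fixed, made-up datetime so the values are reproducible.

```python
from datetime import datetime

# Fixed value for illustration; in the guide this would be datetime.today().
moment = datetime(2025, 1, 15, 10, 30, 0)

full_timestamp = f"{moment}"           # includes the time of day
date_only = moment.date().isoformat()  # yyyy-mm-dd, as the tool schema expects

prompt = f"What is the weather in Berlin on {date_only}?"
```

Passing `datetime.today().date().isoformat()` in the prompt is an optional variation that removes this ambiguity; the rest of the guide works either way.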

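The last step before running the agent is to define the nodes and edges. This example has two nodes and one conditional edge.

- The call_tool node, which executes the tool. LangGraph also ships a prebuilt node for this, called ToolNode.
- The call_model node, which calls the model with the tools bound to it (the model object created earlier).
- The should_continue edge, which decides whether to call the tool or finish.

The number of nodes and edges is not fixed. You can add as many as your graph needs. For example, you could add a node that produces structured output, or a self-verification/reflection node that checks the model output before calling a tool or the model.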

    from langchain_core.messages import ToolMessage
    from langchain_core.runnables import RunnableConfig

    tools_by_name = {tool.name: tool for tool in tools}

    # Define our tool node
    def call_tool(state: AgentState):
        outputs = []
        # Iterate over the tool calls in the last message
        for tool_call in state["messages"][-1].tool_calls:
            # Look up the tool by name and run it with the model-supplied arguments
            tool_result = tools_by_name[tool_call["name"]].invoke(tool_call["args"])
            outputs.append(
                ToolMessage(
                    content=tool_result,
                    name=tool_call["name"],
                    tool_call_id=tool_call["id"],
                )
            )
        return {"messages": outputs}

    def call_model(
        state: AgentState,
        config: RunnableConfig,
    ):
        # Invoke the model with the conversation messages
        response = model.invoke(state["messages"], config)
        # This returns a list, which combines with the existing messages state
        # using the add_messages reducer.
        return {"messages": [response]}

    # Define the conditional edge that determines whether to continue or not
    def should_continue(state: AgentState):
        messages = state["messages"]
        # If the last message contains no tool calls, then finish
        if not messages[-1].tool_calls:
            return "end"
        # Otherwise continue with the tool node
        return "continue"
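The state declares a number_of_steps counter, but the routing function above does not consult it. One way the counter could be used, shown here as a sketch under assumptions rather than as part of the guide's agent, is to stop the loop after a fixed number of steps. `MAX_STEPS` and `should_continue_with_limit` are hypothetical, and SimpleNamespace objects stand in for real messages.

```python
from types import SimpleNamespace

MAX_STEPS = 5  # hypothetical limit chosen for this sketch

def should_continue_with_limit(state: dict) -> str:
    """Route to the tool node unless the model is done or the step budget is spent."""
    last_message = state["messages"][-1]
    if not getattr(last_message, "tool_calls", None):
        return "end"
    if state.get("number_of_steps", 0) >= MAX_STEPS:
        return "end"
    return "continue"

# Stand-ins for model replies with and without a pending tool call.
wants_tool = SimpleNamespace(tool_calls=[{"name": "get_weather_forecast"}])
plain_reply = SimpleNamespace(tool_calls=[])
```

For the limit to take effect, a node would also have to increment the counter, for example by having call_model return `"number_of_steps": state.get("number_of_steps", 0) + 1` alongside its messages. That change is an assumption of this sketch and is not made in the code above.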

With all the agent components in place, you can now assemble them.

    from langgraph.graph import StateGraph, END

    # Define a new graph with our state
    workflow = StateGraph(AgentState)

    # 1. Add the nodes
    workflow.add_node("llm", call_model)
    workflow.add_node("tools", call_tool)

    # 2. Set the entry point to `llm`; this is the first node called
    workflow.set_entry_point("llm")

    # 3. Add a conditional edge after the `llm` node is called.
    workflow.add_conditional_edges(
        # Edge is used after the `llm` node is called.
        "llm",
        # The function that will determine which node is called next.
        should_continue,
        # Mapping for where to go next: keys are the strings returned by the
        # function, and values are the nodes to route to.
        # END is a special node marking that the graph is finished.
        {
            # If `continue`, then we call the tool node.
            "continue": "tools",
            # Otherwise we finish.
            "end": END,
        },
    )

    # 4. Add a normal edge after `tools` is called; the `llm` node is called next.
    workflow.add_edge("tools", "llm")

    # Now we can compile and visualize our graph
    graph = workflow.compile()

You can visualize the graph with the draw_mermaid_png method.

    from IPython.display import Image, display

    display(Image(graph.get_graph().draw_mermaid_png()))

Now run the agent.

    from datetime import datetime

    # Create our initial message dictionary
    inputs = {"messages": [("user", f"What is the weather in Berlin on {datetime.today()}?")]}

    # Call our graph with streaming to see the steps
    for state in graph.stream(inputs, stream_mode="values"):
        last_message = state["messages"][-1]
        last_message.pretty_print()

You can now continue the conversation, for example by asking about the weather in another city or requesting a comparison.

    # Append a follow-up question to the final state of the previous run
    state["messages"].append(("user", "Would it be warmer in Munich?"))

    for state in graph.stream(state, stream_mode="values"):
        last_message = state["messages"][-1]
        last_message.pretty_print()